Tales from the jar side: GPT-5 from Java, gpt-oss, More theme song experiments, and the usual social media gags

I replaced my rooster with a duck. Now I wake up at the quack of dawn. (rimshot)

Welcome, fellow jarheads, to Tales from the jar side, the Kousen IT newsletter, for the week of August 2 - 10, 2025. This week I taught Spring AI on the O’Reilly Learning Platform and a Beginning Java course to a private client.

GPT-5 Released

The big AI news this week (or at least one of the big news items) was the release of the GPT-5 model from OpenAI. More properly, that should be called the GPT-5 models, plural, because when you submit a question, GPT-5 includes a router that figures out which model to use under the hood and selects it for you. Experts are not necessarily wild about that, but it might help simplify life for regular people.

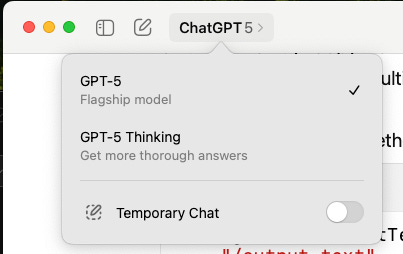

If you use the app, you’ll see they eliminated all the other options:

As you might imagine, that caused a bit of controversy right away. People get very attached to the models they like, which all seem to have slightly different personalities. That’s not really treating the models like they were human; it’s deliberate, and related to the post-training stages the company takes. Models can be overly friendly to the point of being obsequious, or a bit too professional, or dry, or a few other characteristics, even though those seem to change over time.

At any rate, the pushback against removing all the old models was large enough that they re-enabled GPT-4o, but I don’t see it in my app, and I’m supposedly up to date.

The response to the new GPT-5 models from the community was decidedly mixed. Some people really liked them, especially because they felt faster than normal. Others were disappointed that GPT-5 is definitely not a major leap in capabilities. To them, it feels more like an incremental change.

So far, my impression is that it’s good, but I haven’t really pushed it yet. It successfully accomplished the coding tasks that I asked it to do, but I live on Claude Code, and Claude Code only works with Claude models. I tried out GPT-5 in Junie, but ran into problems unrelated to the underlying model.

(That’s got me a bit worried, btw. I have to teach a Junie course in the middle of September, and I’m still having big issues with the product. I guess I’ll keep working on it.)

Of most interest to me was whether I could access the new models in the programmatic API. If you look at the web page talking about the coding model, you’ll see that first of all, there are three versions available:

gpt-5, the one that does everything

gpt-5-mini, which they call “cost optimized”, and

gpt-5-nano, for “high throughput” tasks

That’s good for me, though, because it means I can use the cheap model for tests while I figure out how best to use the new models at all. And nano really is cheap. All the reviewers agreed that the nano model is by far the least expensive model around that still does a decent job.

Programmatic access

As far as calling it programmatically, here’s their curl sample:

curl --request POST \
  --url https://api.openai.com/v1/responses \
  --header "Authorization: Bearer $OPENAI_API_KEY" \
  --header 'Content-type: application/json' \
  --data '{
    "model": "gpt-5",
    "input": "How much gold would it take to coat the Statue of Liberty in a 1mm layer?",
    "reasoning": {
      "effort": "minimal"
    }
  }'

There are two items of note in that block. First, they really want developers to use that “responses” endpoint, which they’ve been pushing for a while as a replacement for the old completions endpoint. Second, they added a reasoning block, which lets you specify the effort the model should use (valid values are minimal, low, medium, and high).

That means the existing Java frameworks, Spring AI and LangChain4j, are going to have to change if they want to let you specify the reasoning property. One feature of the frameworks is that they’ve already mapped the input and output JSON structures to Java classes, and now those classes have to be updated.
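To make the mapping idea concrete, here is a minimal sketch of what Java types for the request in the curl sample could look like once the reasoning block is included. All of the names here (ReasoningEffort, Reasoning, ResponsesRequest) are hypothetical and are not the actual Spring AI or LangChain4j classes. The JSON is written by hand only to keep the sketch dependency-free; a real project would let Jackson serialize the records.

```java
import java.util.Locale;

// Hypothetical types for illustration only; not Spring AI or LangChain4j classes.

/** The four effort values listed above as valid. */
enum ReasoningEffort {
    MINIMAL, LOW, MEDIUM, HIGH;

    /** Lowercase form used in the request JSON, e.g. "minimal". */
    String wireValue() {
        return name().toLowerCase(Locale.ROOT);
    }
}

/** Maps the "reasoning" block from the curl sample. */
record Reasoning(ReasoningEffort effort) {}

/** Maps the top-level request body: model, input, and the reasoning block. */
record ResponsesRequest(String model, String input, Reasoning reasoning) {

    /** Hand-rolled JSON; assumption: only quotes and backslashes need escaping. */
    String toJson() {
        return """
                {"model": "%s", "input": "%s", "reasoning": {"effort": "%s"}}"""
                .formatted(escape(model), escape(input), reasoning.effort().wireValue());
    }

    // Escapes backslashes first, then double quotes. Control characters are not handled.
    private static String escape(String s) {
        return s.replace("\\", "\\\\").replace("\"", "\\\"");
    }
}
```

Using an enum for the effort level means a typo like "minimum" fails at compile time instead of coming back as an error from the API.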

I’m not one to wait around for that, however. I spent way more time than I should have on a new Spring Boot project, called SpringGPT5, which uses both the traditional Spring AI approach (meaning I can’t say anything about reasoning) and a direct call with Spring’s RestClient and JSON parsing classes. I’m hoping to make a video about it*, but here are the major points I learned:

You can specify the model as gpt-5 (or one of the smaller ones) and it works, but that model does not support the temperature parameter.

To get around that, you can specify a temperature = 1.0, which seems high, but apparently that’s what it wants to see.

*I know I’ve been saying that a lot. I really do have several videos I’d like to finally produce and upload.

That worked with the current version of Spring AI, so that was the simple approach. Specifically, you can just go into the application.properties file and add:

spring.ai.openai.api-key=${OPENAI_API_KEY}
spring.ai.openai.chat.options.model=gpt-5-nano
spring.ai.openai.chat.options.temperature=1.0

That does the trick. Again, you can’t do anything with the reasoning issue, or process the newly added output properties.

To do things manually, I used a RestClient and a Java text block to send a POST request to the LLM, and then used Jackson’s ObjectMapper to parse the resulting data. Here’s the important part, without all the error handling and validation stuff:

The key is to tell the RestClient you want the body back as a JsonNode. After that it’s a question of walking the tree to find the response values you want.
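As a rough, dependency-free sketch of the request half of that approach: the code below builds the JSON body with a Java text block and prepares the POST request. It is not the code from the repository. It swaps in the JDK's built-in java.net.http client for Spring's RestClient, the class and method names (Gpt5RequestSketch, body, request, send) are made up for illustration, and it returns the raw JSON string rather than a Jackson JsonNode, so the tree-walking step is left out.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

// Hypothetical class for illustration; uses the JDK HTTP client, not Spring's RestClient.
class Gpt5RequestSketch {

    // Endpoint taken from the curl sample earlier in this section.
    static final URI RESPONSES_URI = URI.create("https://api.openai.com/v1/responses");

    /** Builds the JSON body as a text block. Assumption: the prompt needs no JSON escaping. */
    static String body(String model, String prompt, String effort) {
        return """
                {
                  "model": "%s",
                  "input": "%s",
                  "reasoning": { "effort": "%s" }
                }
                """.formatted(model, prompt, effort);
    }

    /** Builds (but does not send) the POST request with the same headers as the curl sample. */
    static HttpRequest request(String apiKey, String jsonBody) {
        return HttpRequest.newBuilder(RESPONSES_URI)
                .header("Authorization", "Bearer " + apiKey)
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(jsonBody))
                .build();
    }

    /** Sends the request and returns the raw response body as a JSON string. */
    static String send(HttpRequest request) throws IOException, InterruptedException {
        return HttpClient.newHttpClient()
                .send(request, HttpResponse.BodyHandlers.ofString())
                .body();
    }
}
```

Calling `Gpt5RequestSketch.send(Gpt5RequestSketch.request(System.getenv("OPENAI_API_KEY"), Gpt5RequestSketch.body("gpt-5-nano", "Why is the sky blue?", "minimal")))` would make the actual call. Keeping the build and send steps separate makes the request easy to check in a unit test without touching the network.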

The code got more involved than I intended, but I wanted everything to be tested and robust and all those other motherhood-and-apple-pie words. If you want to see the results, they’re in this GitHub repository.

Everything was built using GitHub Actions on each commit, and I managed to tie SonarCloud to it for code quality measurements. There are currently no issues at all, which is not easy to do with SonarCloud. We’ll see how long that lasts.

Codex CLI pain

As far as agents go, I did try briefly to use OpenAI’s Codex CLI, which was a massively frustrating experience. They’ve really locked that down recently. It was hard enough just to get it to read the files in the current project (!), and everything I asked it to do took forever. Heck, just to get it to use my regular account, you have to remember to unset the OPENAI_API_KEY variable in that shell (that’s seriously annoying) and to get out of read-only mode you have to start it up with:

> codex --sandbox=workspace-write --ask-for-approval=on-request --model=gpt-5

so that’s fun. Or, rather, the opposite of fun. I never used to have to do all that. I think there’s a config file I can set up that will take care of it all for me, but yikes. I’m sure this is all done for your protection (implying I should be grateful), but hey, I’m running on my own machine, and to use Claude Code all I have to do is say “claude” and I’m up and running.

I have no idea what the OpenAI people are thinking. They’ve practically made the tool unusable, and again I have a class coming up in the Fall where I’m supposed to teach this stuff. As the Chinese curse goes, “May you live in interesting times.”

Also Released: gpt-oss and an Ollama UI

Two days before the release of GPT-5, OpenAI finally returned to its roots and released a couple of open-weights models.

The models are called gpt-oss-20b and gpt-oss-120b, where the oss supposedly stands for open source, but everybody knows that’s not true. These are open weights models, meaning all they give you are the weights in the underlying neural network rather than the training data, full source code, and other artifacts expected of true open source projects. Still, this is the first time OpenAI has released even the weights since GPT-2, so that’s something.

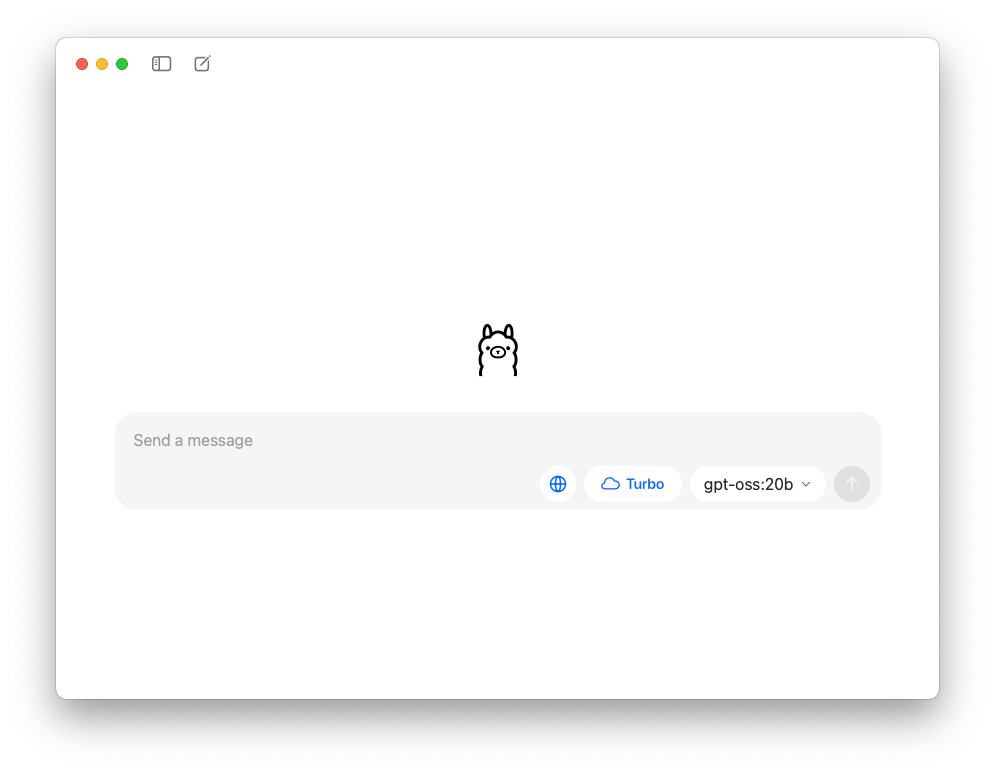

The Ollama people, whose framework allows you to run LLMs on your local machine, released a UI for the first time and supported the models, as well as others you’ve downloaded:

I have enough RAM on my machine to run the 20b parameter model, and if you click the Turbo button, this thing is FAST. I mean warp speed fast. I’m getting decent answers back in under a second, literally, and the answers are surprisingly good. I’d like to try out their 120b parameter model, but they say that model requires at least 80 gigs of RAM (whoa), and that’s way beyond what I can do.

They now have a subscription service that lets you run on their cloud, and it also provides many more models. I don’t think I can justify another $20/month subscription, though, so I’m going to wait on that for the time being. Still, the performance is impressive enough I may have to play with this some more, especially because what I’m doing is still free.

More Theme Song Experiments (and a Playlist)

The past couple of weeks I’ve mentioned the attempts I’ve been making with Suno AI to generate a theme song for the Tales from the jar side newsletter and YouTube channel. I had GPT-4.1 generate the lyrics, and then asked Suno to create songs in various styles.

Here are a couple more interesting attempts. First, I asked for a song that sounded like the theme to a spy movie from the 1960s. The result isn’t that, but is pretty good anyway:

Next I asked for a sexy bossa nova singer and got this:

This is what I got when I asked for a 90’s boy band:

Kind of ends in the middle of a phrase, or maybe that’s just me.

Finally, this experiment was not entirely successful, but for my Indian friends I decided to ask for a song that could reasonably be used as the dance music for a scene in a Bollywood movie. It unfortunately came out too electronic, but it has the notable feature that of all the songs I’ve generated, it’s the only one where the singer pronounces the word “compilers” correctly.

If anyone from India wants to suggest a better prompt, please let me know and I’ll try again.

Since the number of variants is starting to accumulate, I made a playlist of them all at Suno. I made the playlist public, so you should be able to access it without a problem. Let me know if you have any trouble.

I should also mention that ElevenLabs, the company with the best text-to-speech generator, announced its own music generator. I tried it out, but the quality was so much lower than these I’m not going to inflict the result on you. Maybe I’ll try again later. We’ll see.

Social Media Posts

Jim Lovell

Every time I look at his picture, I expect him to look more like Tom Hanks from the movie.

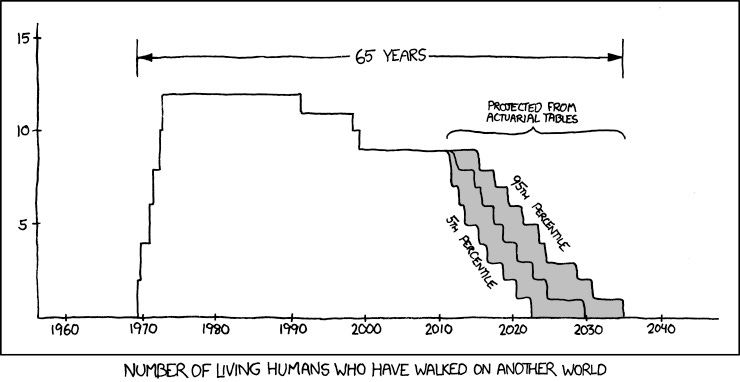

Sadly, whenever we lose another Apollo astronaut, I’m reminded of this XKCD comic:

We’re getting closer to that point where there’s no one left who walked on the Moon. Will we return before that? Heck, with the current administration, I’m not sure we’ll even be here at all by the end of that graph.

American

Sure, but if the waiter is American, nobody would be surprised.

Dinosaurs

Howl, shout, growl, clamor, etc.

Puns

You know what they say: it’s only a murder of crows if you have probable caws.

Little League Dad Joke

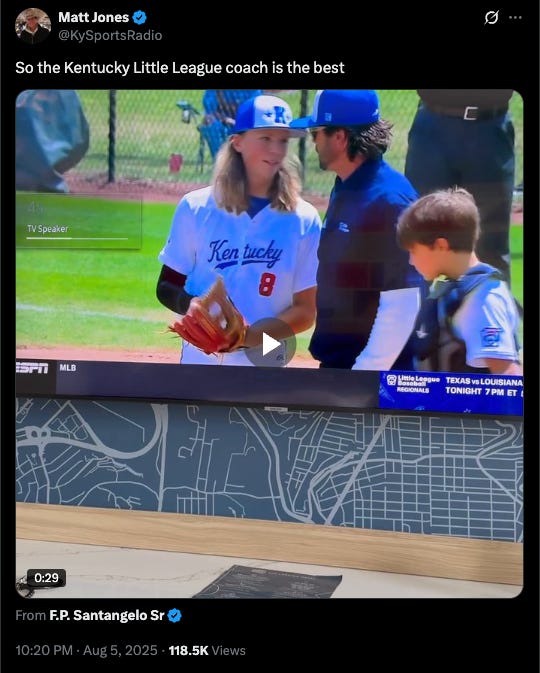

You might have heard about the Little League coach who keeps his players loose by telling Dad jokes and other silliness. This article in The Athletic talks about him. His name is Jake Riordan, and he’s the coach of a group of neighborhood kids from the Lexington Eastern Little League. He’s had them warm up for an inning by pretending to have a baseball while they throw it around the infield, or playing touch football between innings while wearing baseball gloves, and telling awful Dad jokes.

This post on Twitter went viral:

They were in a tournament and he thought his pitcher was getting nervous, so he came out to the mound and, according to the article, had the following conversation:

“Do you know that a koala bear is not actually a bear? It’s a marsupial,” Riordan told the kids, who looked perplexed.

“Do you know why a koala bear is a marsupial and not a bear? Does anyone know?”

More blank stares.

“It doesn’t have the koala-fications.”

With that, Riordan turned around and walked back. But not before catching a glimpse of Denton, who seemed properly unimpressed.

Yup, that works. When he got home, his daughter told him he’d gone viral on social media, for the best of reasons.

Nice twist on an old gag

Evil

When I was an engineer, that’s the sort of thing I wanted to build. Also, self-destruct buttons triggered by the computer, complete with countdown timers. I can destroy a computer now easily enough, but to have it go up on cue? Awesome.

Let’s finish with this thought:

Real or Not?

Have a great week, everybody. :)

Last week:

Spring AI, on the O’Reilly Learning Platform

Beginning Java, private class

This week:

Functional Java, on the O’Reilly Learning Platform